AI governance in action: How legal teams can control AI risk inside vendor contracts

AI adoption is accelerating across the business. New SaaS vendors are embedding generative AI into their platforms. Existing providers are switching on AI functionality by default. Procurement wants speed. The business wants innovation.

Legal is expected to approve it all – quickly – without increasing risk. This is where AI governance in contracts becomes very real. And it is also where many organizations struggle.

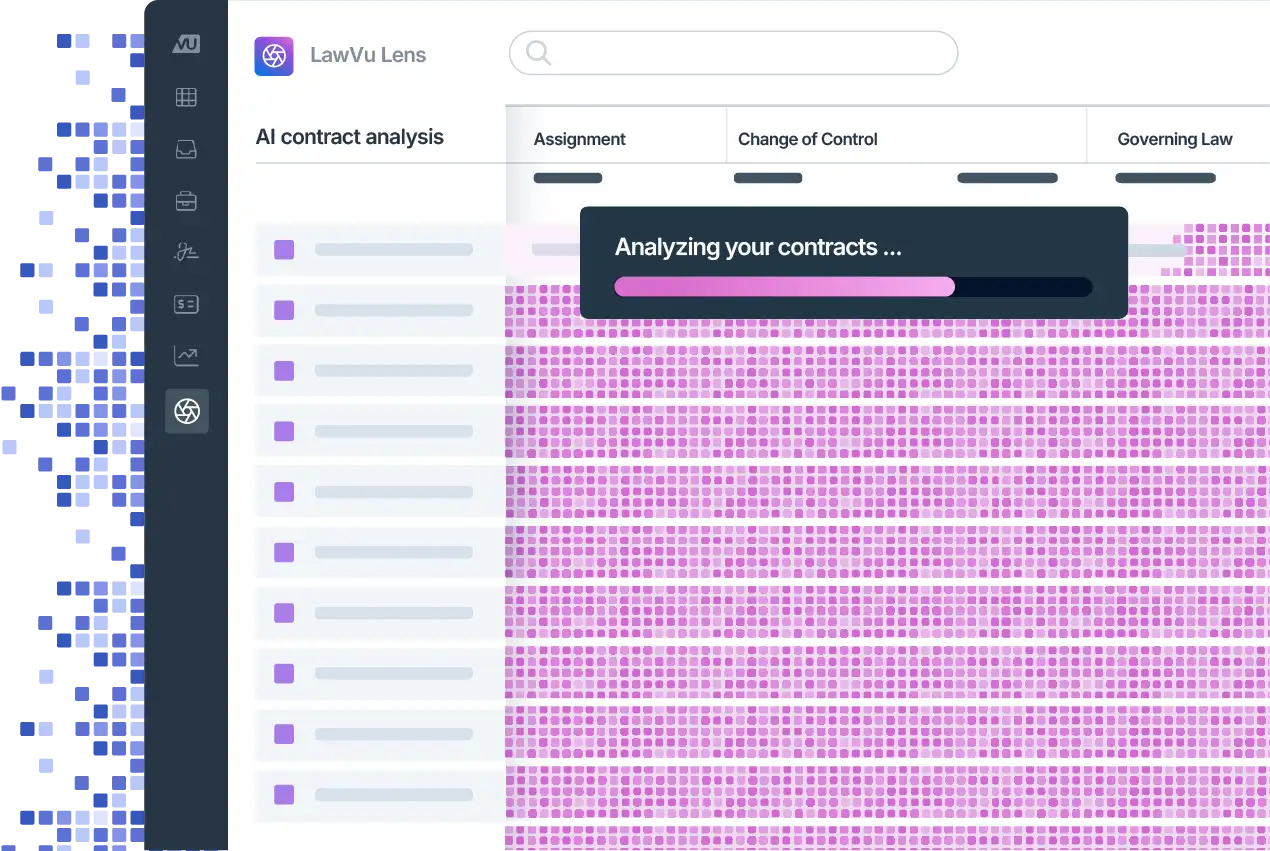

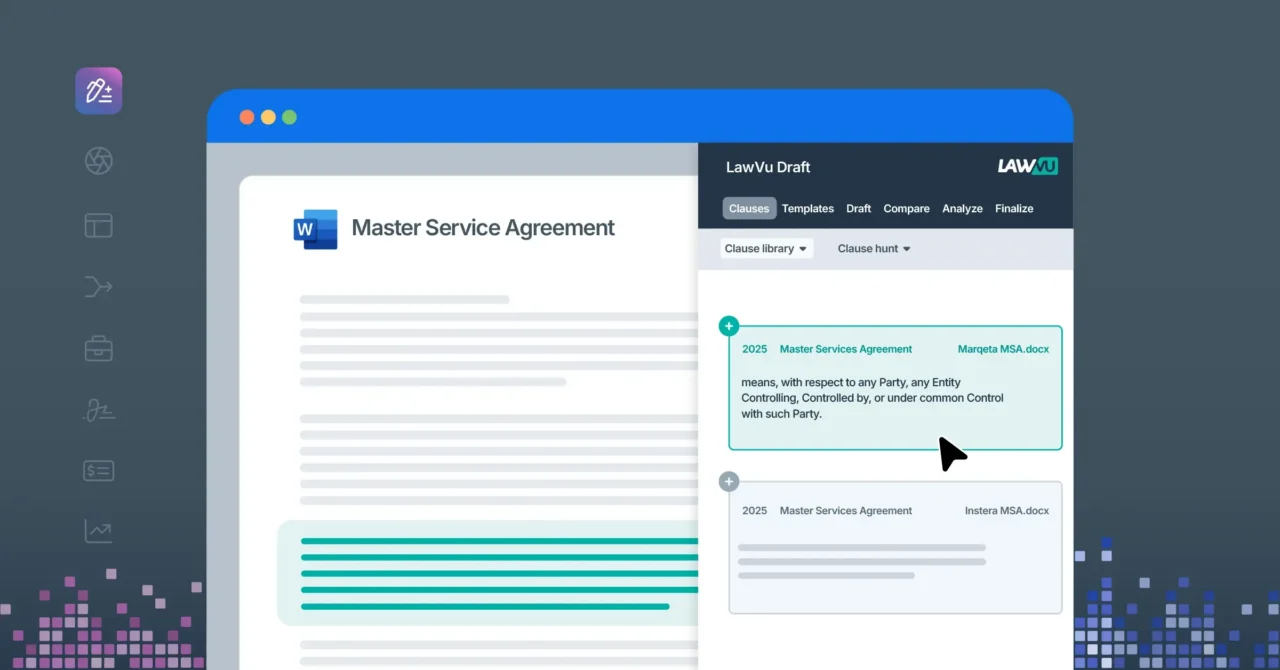

At LawVu, we built LawVu Draft specifically to address this operational gap. It sits directly inside Microsoft Word and enables in-house legal teams to review and redline contracts using governance-aligned playbooks, clause libraries, and AI-powered review workflows. In practical terms, it allows legal teams to compare vendor agreements against their approved standards, surface deviations instantly, and insert compliant language in a structured, consistent way.

When reviewing terms for AI services, that capability is no longer optional. It is essential.

The governance gap is not about policy, but about execution

Most organizations now have some form of AI governance framework. They may have principles around responsible AI use, data protection, human oversight, and regulatory compliance.

But policy alone does not control risk. Vendor risk is controlled at the contract level – often in the services agreement and data processing addendum. That is where data usage rights are defined, audit rights are negotiated, and IP ownership is clarified.

AI adoption is outpacing governance enforcement. The challenge is not writing a policy. The challenge is ensuring that every AI-enabled vendor contract reflects it.

Without structure, that enforcement becomes inconsistent. Manual review makes it easy to miss embedded AI risk. Different lawyers apply different fallback positions. Junior team members may not spot subtle exposure. Negotiations stretch out while internal stakeholders are consulted repeatedly.

AI governance needs to be operationalized. That means turning governance principles into repeatable contracting rules. That is exactly where LawVu Draft supports in-house teams.

Where AI risk hides in vendor agreements

AI risk rarely appears neatly labelled as “Artificial Intelligence”. Instead, it is embedded in language that looks familiar:

- A vendor may reserve the right to “use customer data to improve its services.”

- Inputs and outputs may be reviewed for “abuse monitoring.”

- The customer grants a broad licence to data submitted through the platform.

- Sub-processors may be appointed at the vendor’s discretion.

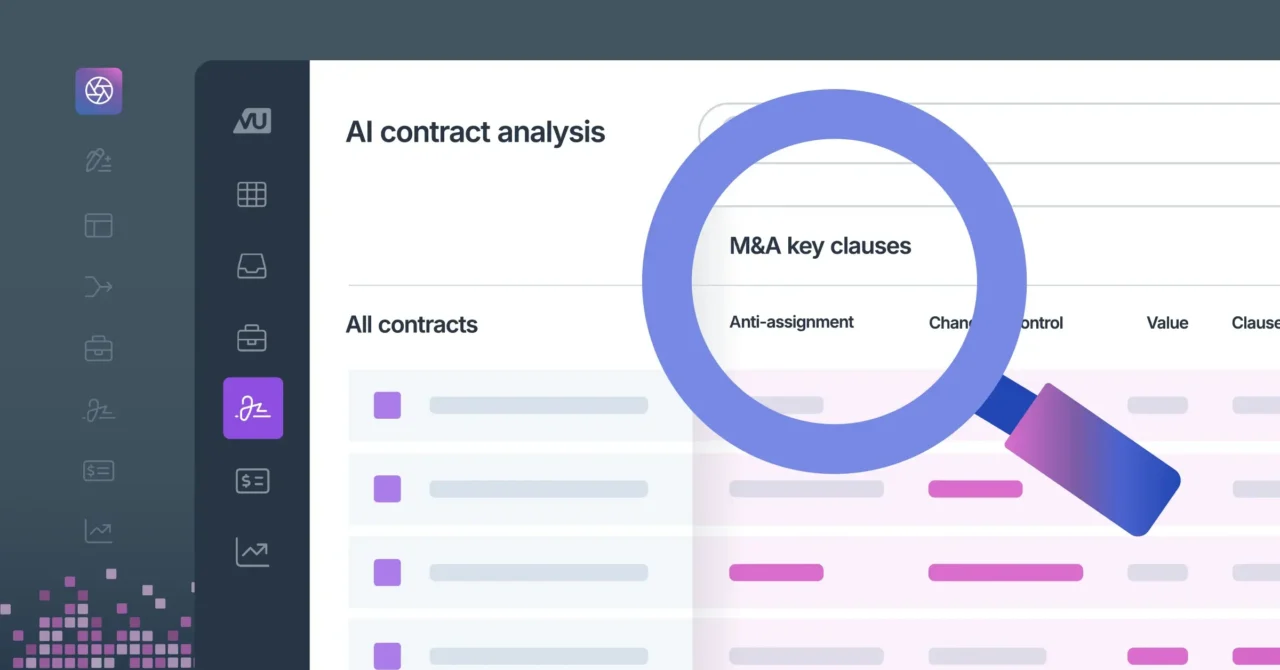

None of those clauses necessarily mention AI. But each one can carry significant AI vendor risk. The key governance questions usually center on:

- Is customer data being used for model training?

- Are outputs grounded in customer data or public sources?

- Is there meaningful transparency around sub-processors?

- Are there clear audit rights?

- Who owns the inputs and outputs?

These issues are often buried inside broader SaaS language. Under time pressure, it is easy to conduct a surface-level review. Risk is not always explicitly labelled as “AI,” which makes it even harder to identify consistently. This is why structured, playbook-driven review is so powerful.
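To make the idea concrete, here is a minimal sketch of how familiar-looking contract language could be mapped back to the governance questions above. It is an illustration only, not a description of how LawVu Draft works: the patterns, the `RISK_PATTERNS` name, and the `flag_clauses` function are all hypothetical, and a real playbook would need far more nuance than simple pattern matching.

```python
import re

# Hypothetical phrase patterns, each mapped to the governance question it raises.
# These are illustrative assumptions, not an exhaustive or authoritative list.
RISK_PATTERNS = {
    "model training": re.compile(
        r"\bimprove (its|our|the) services\b", re.IGNORECASE
    ),
    "review of inputs and outputs": re.compile(
        r"\babuse monitoring\b", re.IGNORECASE
    ),
    "sub-processor transparency": re.compile(
        r"\bsub-?\s?processors?\b.*\bdiscretion\b", re.IGNORECASE
    ),
}


def flag_clauses(clauses):
    """Return (clause_index, governance_question) pairs for matching clauses."""
    flags = []
    for index, text in enumerate(clauses):
        for question, pattern in RISK_PATTERNS.items():
            if pattern.search(text):
                flags.append((index, question))
    return flags


# Sample clauses, invented for this example.
SAMPLE_CLAUSES = [
    "Vendor may use customer data to improve its services.",
    "Fees are payable within 30 days of invoice.",
    "Sub processors may be appointed at the vendor's discretion.",
]

for index, question in flag_clauses(SAMPLE_CLAUSES):
    print(f"Clause {index}: check {question}")
```

None of the flagged clauses mentions AI, which is the point: the review rule carries the governance knowledge, so the reviewer does not have to rely on spotting it unaided. The lawyer still decides what each flag means.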

A realistic scenario: Approving a generative AI SaaS vendor

Consider a common scenario. The business wants to onboard a new SaaS vendor that uses generative AI to process customer data. The functionality looks valuable. The team is excited. Procurement is keen to move quickly.

The vendor contract includes broad data usage rights. There is limited transparency around model training. Audit rights are narrow.

Legal must identify AI-related clauses quickly, compare them against approved governance standards, insert compliant language, and ensure consistency across similar contracts.

If this review is handled manually, the process is slow. Junior lawyers may miss subtle exposure. Internal stakeholders such as privacy, security, and IT are repeatedly consulted because positions are not clearly operationalized. Negotiations stall while fallback language is drafted from scratch.

Now imagine approaching that same contract with a governance-aligned playbook already built into your review process.

Using LawVu Draft inside Microsoft Word, the legal team can apply a predefined AI governance playbook to the vendor agreement. The tool compares the contract to the organization’s approved positions and surfaces deviations clearly. Non-compliant language is highlighted. Missing protections are flagged. Approved AI clauses can be inserted directly into the document, preserving formatting and structure.

The lawyer remains the decision-maker. The tool does not provide legal advice. It compares the contract against the rules the organization has already agreed.

That distinction matters. Governance remains human-led. But enforcement becomes faster and more consistent.

Translating AI governance into repeatable contract controls

One of the most important aspects of AI governance is cross-functional alignment. Legal does not set AI policy alone. Data protection officers, information security leaders, IT, procurement, and compliance teams are all involved.

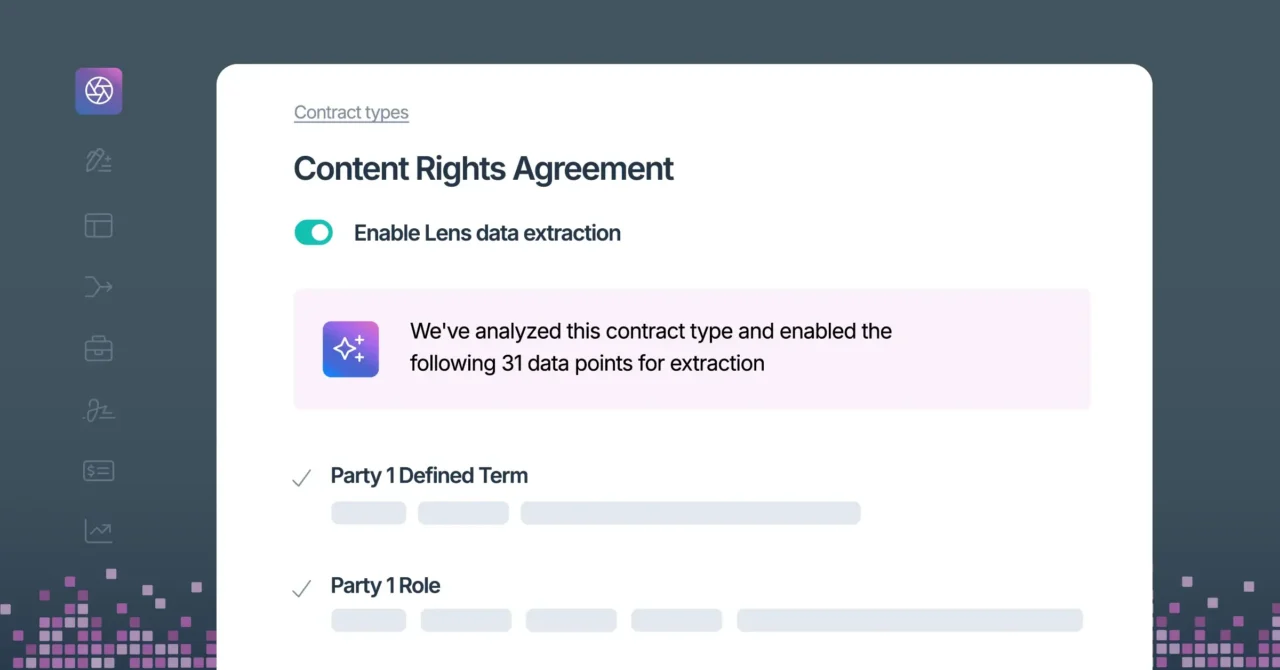

LawVu Draft supports that collaboration by enabling legal teams to convert agreed governance principles into operational playbooks. Once the organization has aligned on positions such as “no training on our data” or “human-in-the-loop review required,” those principles can be embedded into clause libraries and review rules. From that point forward, every vendor contract can be tested against the same risk profile.

This creates guardrails during drafting and review. It strengthens data governance and confidentiality protections. It standardizes language around IP ownership of inputs and outputs. It supports consistent positions on security controls, audit rights, and sub-processor transparency.

Most importantly, it reduces reliance on institutional memory. Governance becomes systematized rather than personality-driven.
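As a rough illustration of what turning an agreed principle into a repeatable rule can look like, the sketch below models a playbook as a list of positions and checks a contract for missing protections. Everything in it is an assumption made for the example: the `Position` structure, the `required_terms` wording, and the placeholder clause text are hypothetical, and it does not represent LawVu Draft's playbook format or any approved legal language.

```python
from dataclasses import dataclass


@dataclass
class Position:
    name: str             # the agreed governance principle
    required_terms: list  # wording whose absence suggests a missing protection (assumed)
    approved_clause: str  # placeholder for pre-approved language, not real drafting


# Hypothetical playbook built from the two example positions in the text.
PLAYBOOK = [
    Position(
        name="No training on our data",
        required_terms=["shall not use customer data to train"],
        approved_clause="[approved no-training clause]",
    ),
    Position(
        name="Human-in-the-loop review required",
        required_terms=["human review"],
        approved_clause="[approved human oversight clause]",
    ),
]


def missing_protections(contract_text, playbook):
    """Return the positions for which none of the required wording appears."""
    text = contract_text.lower()
    return [
        position
        for position in playbook
        if not any(term in text for term in position.required_terms)
    ]


# Sample contract text, invented for this example.
SAMPLE_CONTRACT = (
    "Vendor shall not use Customer Data to train or fine-tune models. "
    "Customer may audit Vendor once per year."
)

for position in missing_protections(SAMPLE_CONTRACT, PLAYBOOK):
    print(f"Missing: {position.name} -> propose {position.approved_clause}")
```

Because the positions live in one shared structure rather than in individual lawyers' heads, every vendor contract is tested against the same list, and the fallback language is already agreed before negotiation starts.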

Why this changes the business conversation

AI compliance in SaaS agreements is not just a legal issue. It is a business enabler.

When review processes are structured and consistent, vendor onboarding becomes faster. Negotiations are more predictable because fallback positions are pre-approved. Internal escalations decrease because the governance framework has already been translated into contract language.

In my own experience using LawVu Draft, straightforward agreements that previously required careful line-by-line review can now be assessed in seconds when aligned to a defined playbook. Key issues are surfaced clearly at the top of the review. I am not scrolling through pages trying to identify hidden risk. That time saving is significant across a contract portfolio.

And that reclaimed time matters. It allows legal to focus on higher-level AI risk management questions:

- Is the proposed AI use case low, medium, or high risk?

- Does it involve automated decision-making?

- Does it impact individuals?

- Are additional mitigations required beyond contract protections?

Instead of being buried in redlines, the legal team can engage in strategic governance.

From AI policy to enforceable practice

AI governance in contracts is not theoretical. It is practical. It is operational. It is repeatable.

It requires legal teams to:

- Identify AI-related risk quickly

- Apply consistent standards

- Insert approved protections confidently

- Maintain a standardized risk profile across vendors

LawVu Draft enables that inside the environment where lawyers already work – Microsoft Word. It combines playbooks, clause libraries, and AI-powered review to help teams create and redline contracts efficiently. And because it is integrated into the wider LawVu operating system, it leverages your organization’s real contract history and preferred positions to strengthen every review.

AI governance is not achieved by writing a policy. It is achieved by enforcing it consistently, contract by contract.

The real question for legal leaders is not whether AI is being adopted. It already is. The question is whether your governance standards are being applied every time an AI-enabled vendor contract crosses your desk.

See Contracts Intelligence at work with LawVu Draft, and see how it helps legal teams turn AI governance into consistent, contract-level action and move faster, with confidence, when the stakes are high.
